|

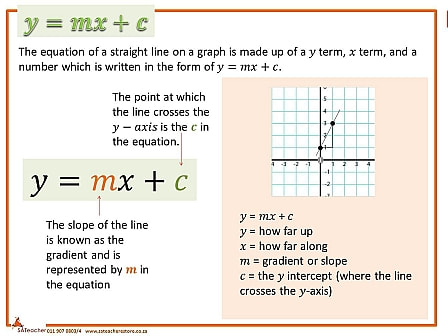

Linear regression, is regarded as "HELLO WORLD! " of machine learning. If you are new to computer science stuff, Hello World! is the first program you write to get a gist of how comfortable you are with the programming language. In general it is the first step you take when learning something. Now coming to linear regression, it is a simple supervised learning technique to find the best trendline to describe a dataset. This post tells you why you are doing what you are doing! Simple linear regression lives up to its name: it is a very straight forward simple linear approach for predicting a quantitative response Y on the basis of a single predictor variable X. Y ≈ w0 + w1 * X. where X = predictor vector in the dataset Y = the already predicted vector for some X in the dataset W = weight vector = [w0 w1] So basically, X, Y, W are vectors lets say, X= ( x1, x2, x3.......xn) Y=( y1, y2, y3........yn) W=( w0, w1, w2.....wn) ([ w0 in W is called as the bias weight, which simply means error that is introduced by approximating real life problems]) And also (x1,y1) ,(x2,y2), (x3,y3).....(xn,yn) are called training pairs. Upon looking closely we see that it resembles to the line equation y = mx+ c Now suppose you have a clean data set and you ran the linear regression algorithm, and you get some output lets name the vector, Y' .

EXAMPLE: For example, suppose you have a faulty weighing machine which says you are 170lbs but you already know you are only 150lbs. In this scenario, the weight you actually are 150lbs is Y , the weight you predicted, 170lbs is Y' and the error is +20lbs because you are 20 pounds greater than what you actually weigh. You get that error by subtracting 170 and 150. This error or error term in machine learning is known as COST FUNCTION ( denoted by 'J' ) or loss function or residual. Generally it is r(x,y) = h(x) = J = Y'-Y where r(x,y) is called Residual h(x) is called Hypothesis Function J is called Cost Function That's the predicted value - the output you already have in the dataset. Remember in unsupervised learning we will not have this Y' because we have unlabelled data. Now lets breathe, that's a lot of information to take in. I spent a lot of time to explain this because if you know linear regression very well, you can pretty much understand anything else. In the next post I will continue linear regression algorithm and what are the variables to be considered when performing linear regression, its disadvantages etc., Peace.

0 Comments

Leave a Reply. |